Information is power. It’s no different in the world of SEO. So here’s an interesting way to get more information on indexation by optimizing your sitemap index file.

What is a Sitemap Index?

A sitemap index file is simply a group of individual sitemaps, using an XML format similar to a regular sitemap file.

You can provide multiple Sitemap files, but each Sitemap file that you provide must have no more than 50,000 URLs and must be no larger than 10MB (10,485,760 bytes). […] If you want to list more than 50,000 URLs, you must create multiple Sitemap files.

If you do provide multiple Sitemaps, you should then list each Sitemap file in a Sitemap index file.

Most sites begin using a sitemap index file out of necessity when they bump up against the 50,000 URL limit for a sitemap. Don’t tune out if you don’t have that many URLs. You can still use a sitemap index to your benefit.
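To make the format concrete, here's a minimal Python sketch that builds a sitemap index file. The `sitemapindex`, `sitemap` and `loc` elements and the namespace come from the sitemaps.org protocol. The function name `build_sitemap_index` and the example.com URLs are assumptions made up for illustration.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap_index(sitemap_urls):
    """Return a sitemap index document (UTF-8 bytes) listing each sitemap URL."""
    # Serialize with a default namespace instead of an "ns0:" prefix.
    ET.register_namespace("", SITEMAP_NS)
    root = ET.Element(f"{{{SITEMAP_NS}}}sitemapindex")
    for url in sitemap_urls:
        entry = ET.SubElement(root, f"{{{SITEMAP_NS}}}sitemap")
        ET.SubElement(entry, f"{{{SITEMAP_NS}}}loc").text = url
    return ET.tostring(root, encoding="utf-8", xml_declaration=True)


# Hypothetical sitemap locations, for illustration only.
index_xml = build_sitemap_index([
    "https://www.example.com/sitemap_1.xml.gz",
    "https://www.example.com/sitemap_2.xml.gz",
])
print(index_xml.decode("utf-8"))
```

The `xml_declaration` argument to `ET.tostring` needs Python 3.8 or later.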

Googling a Sitemap Index

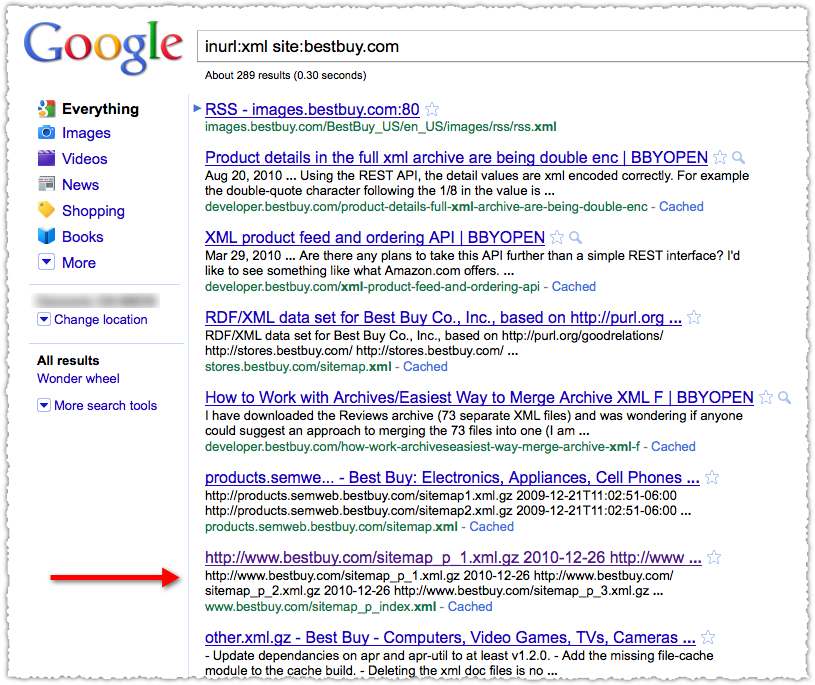

I’m going to search for a sitemap index to use as an example. To do so I’m going to use the inurl: and site: operators in conjunction.

Best Buy was top of mind since I recently bought a TV there and I have a Reward Zone credit I need to use. The sitemap index wasn’t difficult to find in this case. However, they don’t have to be named as such. So if you’re doing some competitive research you may need to poke around a bit to find the sitemap index and then validate that it’s the correct one.

Opening a Sitemap Index

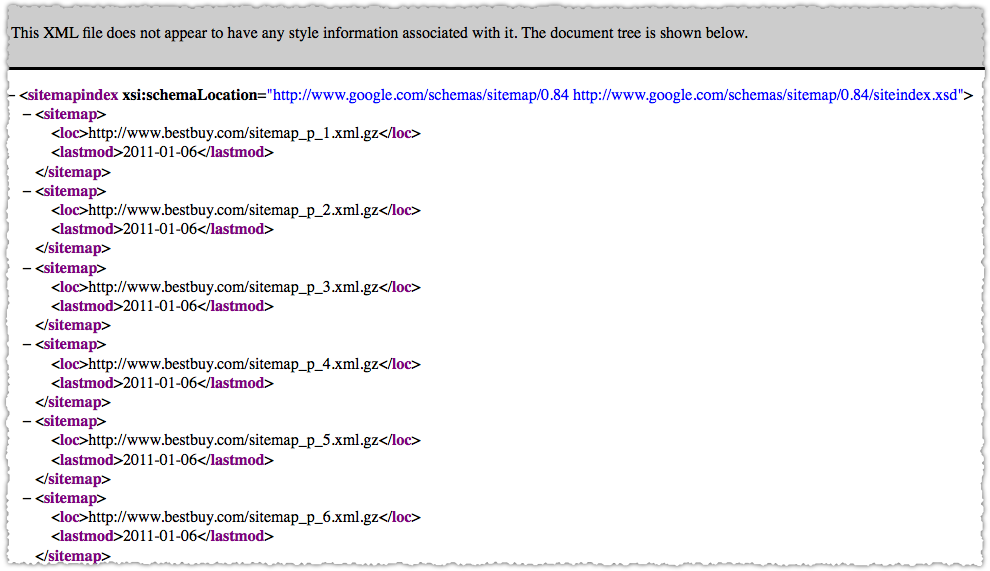

You can then click on the result and see the individual sitemaps.

Here’s what the sitemap index looks like: a listing of each individual sitemap. In this case there are 15 of them, all sequentially numbered.

Looking at a Sitemap

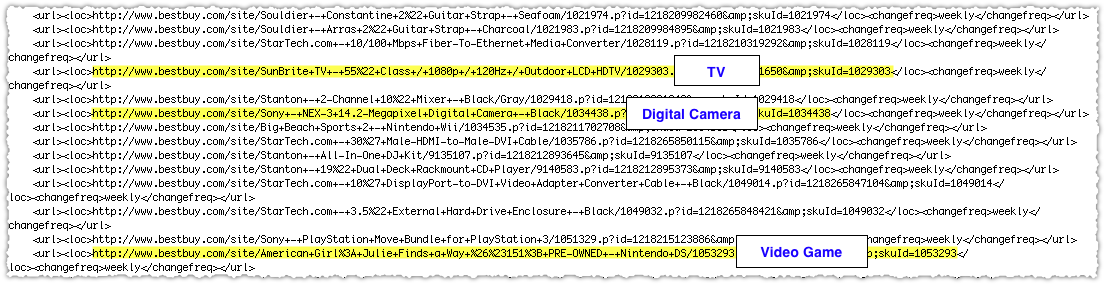

The sitemaps are compressed using gzip so you’ll need to extract them to look at an individual sitemap. Copy the URL into your browser bar and the rest should take care of itself. Fire up your favorite text program and you’re looking at the individual URLs that comprise that sitemap.
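If you'd rather not extract files by hand, the same step can be sketched in Python. This is only an illustration: the function name `urls_from_gzipped_sitemap` and the sample URLs are assumptions, and the sample sitemap is built in memory so the snippet runs without downloading anything.

```python
import gzip
import xml.etree.ElementTree as ET

SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def urls_from_gzipped_sitemap(data):
    """Decompress a gzipped sitemap (bytes) and return the URLs in its <loc> elements."""
    root = ET.fromstring(gzip.decompress(data))
    return [loc.text.strip() for loc in root.iter(f"{SITEMAP_NS}loc") if loc.text]


# A tiny stand-in for a downloaded .xml.gz file (hypothetical URLs).
SAMPLE = gzip.compress(
    b'<?xml version="1.0" encoding="UTF-8"?>'
    b'<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    b"<url><loc>https://www.example.com/tv/product/1</loc></url>"
    b"<url><loc>https://www.example.com/video-games/product/2</loc></url>"
    b"</urlset>"
)
print(urls_from_gzipped_sitemap(SAMPLE))
```

For a real file you would read the bytes from disk, for example with `open(path, "rb").read()`, and pass them to the same function.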

So within one of these sitemaps I quickly find URLs that go to a TV, a digital camera and a video game. They are all product pages, but there doesn’t seem to be any grouping by category. This is standard, but it’s not what I’d call optimized.

Sitemap Index Metrics

Within Google Webmaster Tools you’ll be able to see the number of URLs submitted and the number indexed by sitemap.

Here’s an example (not Best Buy) of sitemap index reporting in Google Webmaster Tools.

So in the case of the Best Buy sitemap index, they’d be able to drill down and know the indexation rate for each of their 15 sitemaps.

What if you created those sitemaps with a goal in mind?

Sitemap Index Optimization

Instead of using some sequential process and having products from multiple categories in an individual sitemap, what if you created a sitemap specifically for each product type?

- sitemap.tv.xml

- sitemap.digital-cameras.xml

- sitemap.video-games.xml

In the case of video games you might need multiple sitemaps if the URL count exceeds 50,000. No problem.

- sitemap.video-games-1.xml

- sitemap.video-games-2.xml

Now, you’d likely have more than 15 sitemaps at this point but the level of detail you suddenly get on indexation is dramatic. You could instantly find that TVs were indexed at a 95% rate while video games were indexed at a 56% rate. This is information you can use and act on.
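Here's a rough sketch of how that grouping could be planned in Python. It assumes you already know the product type for every URL (from your product database, say). The function name `plan_sitemaps` and the sample URLs are made up for illustration; the file names follow the pattern above, and a numbered suffix is added only when a type exceeds the per-sitemap limit.

```python
from collections import defaultdict

MAX_URLS_PER_SITEMAP = 50_000  # limit per sitemap file


def plan_sitemaps(urls_with_type, max_urls=MAX_URLS_PER_SITEMAP):
    """Map sitemap file names to URL lists, one group per product type."""
    by_type = defaultdict(list)
    for url, product_type in urls_with_type:
        by_type[product_type].append(url)

    plan = {}
    for product_type, urls in sorted(by_type.items()):
        # Split each type into chunks no larger than the limit.
        chunks = [urls[i:i + max_urls] for i in range(0, len(urls), max_urls)]
        if len(chunks) == 1:
            plan[f"sitemap.{product_type}.xml"] = chunks[0]
        else:
            for n, chunk in enumerate(chunks, start=1):
                plan[f"sitemap.{product_type}-{n}.xml"] = chunk
    return plan


# Hypothetical URLs; a tiny max_urls is used so the split is visible.
demo = plan_sitemaps(
    [
        ("/tv/product/1", "tv"),
        ("/video-games/product/1", "video-games"),
        ("/video-games/product/2", "video-games"),
        ("/video-games/product/3", "video-games"),
    ],
    max_urls=2,
)
for name, urls in demo.items():
    print(name, len(urls))
```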

It doesn’t have to be one-dimensional either. You can pack a lot of information into individual sitemaps. For instance, maybe Best Buy would like to know the indexation rate by product type and page type. By this I mean, would Best Buy want to know the indexation rate of category pages (lists of products) versus product pages (an individual product page)?

To do so would be relatively straightforward. Just split each product type into separate page type sitemaps.

- sitemap.tv.category.xml

- sitemap.tv.product.xml

- sitemap.digital-cameras.category.xml

- sitemap.digital-cameras.product.xml

And so on and so forth. Grab the results from Webmaster Tools and drop them into Excel and in no time you’ll be able to slice and dice the indexation rates to answer the following questions. What’s the indexation rate for category pages versus product pages? What’s the indexation rate by product type?
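If a spreadsheet isn't your thing, the same slicing can be sketched in a few lines of Python. This assumes sitemap names of the form `sitemap.<product type>.<page type>.xml` and that you've copied the submitted and indexed counts out of the report. The numbers below are invented purely to show the arithmetic, and `indexation_rates` is a hypothetical helper.

```python
from collections import defaultdict


def indexation_rates(rows, key_index):
    """Aggregate (name, submitted, indexed) rows by one part of the sitemap name.

    key_index 0 groups by product type, 1 groups by page type.
    """
    totals = defaultdict(lambda: [0, 0])
    for name, submitted, indexed in rows:
        # "sitemap.tv.category.xml" -> ["tv", "category"]
        parts = name[len("sitemap."):-len(".xml")].split(".")
        key = parts[key_index]
        totals[key][0] += submitted
        totals[key][1] += indexed
    return {key: ind / sub for key, (sub, ind) in totals.items() if sub}


# Invented counts, for illustration only.
ROWS = [
    ("sitemap.tv.category.xml", 100, 90),
    ("sitemap.tv.product.xml", 900, 860),
    ("sitemap.video-games.category.xml", 50, 40),
    ("sitemap.video-games.product.xml", 450, 210),
]

for label, index in (("product type", 0), ("page type", 1)):
    for key, rate in indexation_rates(ROWS, index).items():
        print(f"{label}: {key} {rate:.0%}")
```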

You can get pretty granular if you want, though you can only pack each sitemap index with 50,000 sitemaps. Then again, you’re not limited to just one sitemap index either!

In addition, you don’t need 50,000 URLs to use a sitemap index. Each sitemap could contain a small number of URLs, so don’t pass on this type of optimization thinking it’s just for big sites.

Connecting the Dots

Knowing the indexation rate for each ‘type’ of content gives you an interesting view into what Google thinks of specific pages and content. The two other pieces of the puzzle are what happens before (crawl) and after (traffic). Both of these can be solved.

Crawl tracking can be done by mining weblogs for Googlebot (and Bingbot) by the same sitemap criteria. So, not only do I know how much bots are crawling each day, I also know where they’re crawling. As you make SEO changes, you are then able to see how they impact the crawl and follow that through to indexation.
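As a rough illustration of that log mining, here's a Python sketch that counts bot requests per sitemap group. It makes several assumptions: the logs are in the common "combined" format, bots are identified by a simple user-agent substring (which can be spoofed, so treat the counts as approximate), and the URL patterns in `GROUPS` are hypothetical stand-ins for whatever criteria define your own sitemaps.

```python
import re
from collections import Counter

# Combined log format: ... "GET /path HTTP/1.1" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Hypothetical URL patterns, one per sitemap group. Order matters:
# the first matching pattern wins, so product pages come before category pages.
GROUPS = {
    "tv.product": re.compile(r"^/tv/product/"),
    "tv.category": re.compile(r"^/tv/"),
    "video-games.product": re.compile(r"^/video-games/product/"),
    "video-games.category": re.compile(r"^/video-games/"),
}

BOTS = ("Googlebot", "bingbot")


def crawl_counts(log_lines):
    """Count requests per (bot, sitemap group) from an iterable of log lines."""
    counts = Counter()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        agent = match["agent"].lower()
        bot = next((b for b in BOTS if b.lower() in agent), None)
        if bot is None:
            continue  # not one of the bots we track
        group = next(
            (name for name, pattern in GROUPS.items() if pattern.search(match["path"])),
            "other",
        )
        counts[(bot, group)] += 1
    return counts
```

Run it over one day's log at a time, for example `crawl_counts(open("access.log"))`, and you get a daily crawl count for each sitemap group.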

The last step is mapping it to traffic. This can be done by creating Google Analytics Advanced Segments that match the sitemaps using regular expressions. (RegEx is your friend.) With that in place, you can track changes in the crawl to changes in indexation to changes in traffic. Nirvana!
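Before relying on a segment regex, it's worth checking it against the URLs in your sitemaps. The Python sketch below does that under a few assumptions: `check_segment` is a hypothetical helper, the URL paths are invented, and Python's `re` module is only a stand-in, since the regex flavor in your analytics tool may differ slightly.

```python
import re


def check_segment(pattern, sitemaps, target):
    """Test a segment regex against sitemap URLs.

    Returns (missed, leaked): URLs in the target sitemap the regex fails to
    match, and URLs from other sitemaps that it matches by mistake.
    """
    regex = re.compile(pattern)
    missed = [url for url in sitemaps[target] if not regex.search(url)]
    leaked = [
        url
        for name, urls in sitemaps.items()
        if name != target
        for url in urls
        if regex.search(url)
    ]
    return missed, leaked


# Invented URL paths, for illustration only.
SITEMAPS = {
    "sitemap.tv.product.xml": ["/tv/product/1", "/tv/product/2"],
    "sitemap.tv.category.xml": ["/tv/", "/tv/lcd/"],
}

print(check_segment(r"^/tv/product/\d+$", SITEMAPS, "sitemap.tv.product.xml"))
print(check_segment(r"^/tv/", SITEMAPS, "sitemap.tv.product.xml"))
```

A segment lines up with its sitemap only when both lists come back empty. In this example the second, looser pattern also matches the category pages, so it would overstate product page traffic.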

Go to the Moon

Doing this is often not an easy exercise and may, in fact, require a hard look at site architecture and URL naming conventions. That might not be a bad thing in some cases. And I have implemented this enough times to see the tremendous value it can bring to an organization.

I know I covered a lot of ground so please let me know if you have any questions.

Comments About Optimize Your Sitemap Index

// 16 comments so far.

Joe // September 01st 2011

You’ve really cleared up a lot of questions I had, but I have one more.

Say you have a site structure set up so you have the home page, then you have categories under the homepage, then you have subcategories to those categories and then a few of those subcategory pages (lets call them ‘Awesome Subcategories’) have a ton of pages below them so you want to make a sitemap for each of those Awesome Subcategory pages. Would you set up a general sitemap that captures everything up to the subcategories (including Awesome Subcategories), and then a sitemap for each of the Awesome Subcategory pages?

Would there be a danger to listing the Awesome Subcategory URL in the general sitemap and then again in its own sitemap along with its child URLs? Or is this duplicate URL not an issue?

AJ Kohn // September 02nd 2011

Joe,

Thanks for your comment and questions. Here’s how I’d address this.

First off, I recommend having a URL only appear in one sitemap. URLs showing up in multiple sitemaps won’t hurt you per se, but it reduces your ability to understand the true indexation rate for your site. If I understand your structure correctly you might do something as follows:

/sitemap/static (this would include the home page and other static pages like the contact, about, advertising etc.)

/sitemap/categories (this would include just the category pages that sit under the home page)

/sitemap/subcategories (this would include just the subcategory pages)

/sitemap/[sub].pages (a sitemap for each set of pages under a subcategory)

The last bit allows you to look at the indexation rate for all ‘pages’, but also by subcategory. You could go a step further and layer on a [cat] dimension as well, but your number of sitemaps might get a bit out of hand.

In the end, it’s about what information you want to get out of those sitemaps. Did that help answer your question?

Joe // September 06th 2011

Yes, it did definitely help. I guess this would kind of be a combination of the vertical and horizontal sitemaps methods mentioned on the Distilled blog (http://www.distilled.net/blog/seo/indexation-problems-diagnosis-using-google-webmaster-tools/). I was thinking it should be an either/or method, but since the information I want to get out of the sitemaps is to determine the indexation percentages of different subcategories, this looks like the way to go. Now it’s time to build it! Thanks!

jamshid // May 20th 2013

hi,

in the sitemap xml file. There are two entries:

– http://www.marmom-marine.com

– http://www.marmom-marine.com/index.html

I think they are the same. Do i need to remove one of them?

regs

jamshid

AJ Kohn // May 28th 2013

Jamshid,

Yes and no. You should remove one from the sitemap file but you should also ensure that the /index.html version of your home page 301s to the canonical version.

-AJ

Roman Maeschi // June 18th 2013

That’s some clever insight, thanks for this.

I would love to implement this on our site, but how exactly would I “create Advanced Segments to match the sitemaps”?

I don’t see the link between the sitemaps and traffic.

Many thanks for some insight.

Roman

Toby Jim // November 22nd 2013

Actually i thought ‘xml sitemap and sitemap index file’ both are same. I didn’t know about sitemap index file. After reading the post, it cleared to me that I can create such single file and embed other sitemaps into it. Thanks for helpful information.

Bogdan // December 18th 2013

Fantastic post! Such a simple, powerful idea and yet it seems that most SEOs (including myself!) have overlooked this… Is there a tool out there that helps automatically generate sitemaps by product category?

smyth jorden // July 12th 2014

Thanks for Group multiple sitemap files… But where i can create individual sitemap file, since in my website 30,000 URLs, Like Deals Page, Store Page, Category Pages or more, so i would like to make Single Sitemap with Group Multiple, but i can’t able to done.

AJ Kohn // July 13th 2014

Smyth,

If I understand what you’re asking, you want to create multiple sitemaps that are less than 50,000 URLs? That’s perfectly fine. You create sitemap files for your deals, category pages and then submit them all under the sitemap index.

Giovanni // September 28th 2015

I know the article is not new, but it helped me which were the errors on our small social network

Thanks

Gio’

AJ Kohn // December 21st 2015

Glad it helped Gio!

sandeep // January 09th 2016

i have nested sitemap index sitemap when i submit sitemap in google it shows error can any one help me please

AJ Kohn // January 16th 2016

Sandeep,

I don’t think you can have a sitemap index file within a sitemap index file. You can have multiple sitemap indices that contain sitemaps but nesting sitemap indices will likely lead to errors.

Nikos // May 20th 2016

Hi there,

I have a client whose sitemap is not indexed in Google through the site/inurl command you mention. I’ve checked his sitemap and it’s clean (not a single error page). I’ve never seen such a case. I’ve made a lot of tests using the site: in conjunction with inurl:xml and every time Google returns the sitemap of each webpage I put. Not in this case though.

Any thoughts why this is happening?

AJ Kohn // May 21st 2016

Nikos,

You could check to see if the robots.txt contains a reference to the sitemap file. If it does you can check to see if they’ve applied a noindex ‘on the page’ or via the X-Robots-Tag.
