It has been 267 days since the last Panda update. That’s 8 months and 25 days.

Where’s My Panda Update?

Obviously I’m a bit annoyed that there hasn’t been a Panda update in so long because I have a handful of clients who might (fingers crossed) benefit from having it deployed. They were hit and they’ve done a great deal of work cleaning up their sites so that they might get back into Google’s good graces.

I’m not whining about it (much). That’s the way the cookie crumbles and that’s what you get when you rely on Google for a material amount of your traffic.

Google shouldn’t be concerned about specific sites caught in limbo based on their updates. The truth, hard as it is to admit, is that very few sites are irreplaceable.

You could argue that Panda is punitive and that not providing an avenue to recovery is cruel and unusual punishment. But if you can’t do the time, don’t do the crime.

Do You Even Algorithm, Google?

Why haven’t we seen a Panda update in so long? It seemed to be one of Google’s critical components in ensuring quality search results, launched in reaction to a rising tide of complaints from high-profile (though often biased) individuals.

Nine months is a long time. I’m certain there are sites in Panda jail right now that shouldn’t be and other sites that may be completely new or have risen dramatically in that time that deserve to be Pandalized.

In an age of agile development and the two-week sprint cycle, nine months is an eternity. Heck, we’ve minted brand spanking new humans in that span of time!

Fewer Panda updates equal lower quality search results.

Google should want to roll out Panda updates because without them search results get worse. Bad actors creep into the results and reformed sites that could improve results continue to be demoted.

The Panda Problem

Does the lack of Panda updates point to a problem with Panda itself? Yes and no.

My impression is that Panda continues to be a very resource intensive update. I have always maintained that Panda aggregates individual document scores on a site.

The aggregate score determines whether you are below or above the Panda cut line.

As Panda evolved I believe the cut line has become dynamic based on the vertical and authority of a site. This would ensure that sites that might look thin to Google but are actually liked by users avoid Panda jail. This is akin to ensuring the content equivalent of McDonald’s is still represented in search results.
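To make that mental model concrete, here is a minimal sketch of how I picture it. To be clear, this is my speculation expressed in code, not Google's implementation. Every name and number here (`Site`, `BASE_CUT_LINE`, `MAX_AUTHORITY_DISCOUNT`, the plain average used as the aggregate, the example domains) is a hypothetical chosen for illustration.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List


@dataclass
class Site:
    name: str
    vertical: str
    authority: float         # hypothetical authority signal, 0.0 to 1.0
    doc_scores: List[float]  # hypothetical per-document quality scores, 0.0 to 1.0


# Made-up base cut lines per vertical (assumption, for illustration only).
BASE_CUT_LINE = {"recipes": 0.50, "health": 0.65}
DEFAULT_CUT_LINE = 0.55
# Assumed maximum amount that authority can lower a site's cut line.
MAX_AUTHORITY_DISCOUNT = 0.15


def aggregate_score(site: Site) -> float:
    """Roll individual document scores up into one site-level score."""
    return mean(site.doc_scores)


def cut_line(site: Site) -> float:
    """Dynamic cut line: starts from the vertical, lowered by authority."""
    base = BASE_CUT_LINE.get(site.vertical, DEFAULT_CUT_LINE)
    return base - MAX_AUTHORITY_DISCOUNT * site.authority


def is_pandalized(site: Site) -> bool:
    """A site lands in Panda jail when its aggregate falls below its cut line."""
    return aggregate_score(site) < cut_line(site)


# Two sites with identical thin content but very different authority.
thin_but_loved = Site("fastfood.example", "recipes", 0.9, [0.40, 0.45, 0.40])
thin_unknown = Site("thin.example", "recipes", 0.1, [0.40, 0.45, 0.40])
```

In this toy version both sites have the same document scores, but only the low-authority one falls below its cut line. That is the McDonald's effect in miniature: the content looks equally thin, yet the site users already like stays in the results.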

But think about what that implies. Google would need to crawl, score and compute every site across the entire web index. That’s no small task. In May John Mueller related that Google was working to make these updates faster. But he said something very similar about Penguin back in September of 2014.

I get that it’s a big task. But this is Google we’re talking about.

Search Quality Priorities

I don’t doubt that Google is working on making Panda and Penguin faster. But it’s clearly not a priority. If it were, well … we’d have seen an update by now.

Because we’ve seen other updates. There’s been Mobilegeddon (the Y2K of updates), a Doorway Page Update, the Quality Update and the Colossus Update just the other day. And there’s a drum beat of advancements and work to leverage entities for both algorithmic ranking and search display.

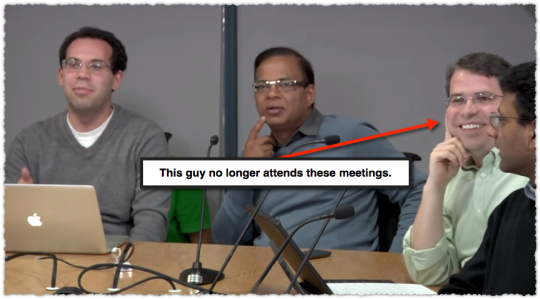

The funny thing is, the one person who might have helped boost Panda as a priority is no longer there. That’s right, Matt Cutts no longer attends the weekly search quality meeting.

As the industry’s punching bag, Matt was able to bring our collective ire and pain to the Googleplex.

Now, I’m certain John Mueller and Gary Illyes both get an earful and are excellent ambassadors. But do they have the pull that Matt had internally? No way.

Eating Cake

We keep hearing that these updates are coming soon. That they’ll be here in a month or a few weeks. There are only so many times you can hear this before you start to roll your eyes and silently say ‘I’ll believe it when I see it.’

What’s more, if Panda still improves search quality then the lack of an update means search quality is declining. Other updates may have helped stem the tide but search quality isn’t optimized.

You can quickly find a SERP that has a thin content site ranking well. (In fact, I encourage you to find and post links to those results in the comments.)

Perhaps Google wants to move away from Panda and instead develop other search quality signals that better handle this type of content. That would be fine, yet it’s obvious that Panda is still in effect. So logically that means other signals aren’t strong enough yet.

At the end of the day it’s not about my own personal angst or yours. It’s not about personal stories of Panda woe as heartbreaking as some of them may be. This is about search quality and putting your money (resources) where your mouth is.

You can’t have your cake and eat it too.

TL;DR

It’s been nearly nine months since the last Panda update. If Panda improves search quality then the prolonged delay means search quality is declining.

Comments About Do You Even Algorithm, Google?

// 17 comments so far.

Dan Shure // June 19th 2015

My hunch is a typical Panda SERP is being overtaken by more Answer Cards in those SERPs (information / non-transactional oriented). So I honestly haven’t noticed a lot of those types of searches get worse, because I pretty much get the answer and go. Perhaps the “10 blue links” quality has gone down, but overall SERP quality is up.

AJ Kohn // June 19th 2015

Completely agree Dan. I think that’s a large part of it. But working with sites that are smack in the middle of answer cards, you still get a material amount of traffic.

So, while the 10 blue links likely matter less I see no reason why they should be generally ignored by Google. I mean, they can’t do two things at once? Sure it’s more complicated than that, but to your point this is evidence that Google’s view of quality is less focused on those 10 blue links every day.

OP // June 19th 2015

AMEN!! By Google’s own admission their results are now outdated by almost 9 months!

AJ Kohn // June 19th 2015

Correct OP. If Panda improves search quality then search quality suffers when it’s not updated regularly. #logic

JR Oakes // June 19th 2015

My thought (though it is only a hunch as well) is that Panda is built with models derived from Deep Learning and that time is needed for training, building and testing new models. Not to mention that the pace at which ML has been advancing recently probably makes it very difficult to pick a cut-off point and a current iteration of the modeling platform to go with. Like I said, only a hunch…

AJ Kohn // June 19th 2015

I can get behind that way of thinking JR. And I think there’s a good chance that Panda uses deep learning of some sort. But at some point you just have to ship it. Done is better than perfect.

Frank // June 19th 2015

“You could argue that Panda is punitive and that not providing an avenue to recovery is cruel and unusual punishment. But if you can’t do the time, don’t do the crime.”

Whose crime? Google’s almighty laws that are passed without prior notice or input from the user? Yeah, a real crime alright. That’s just total BS. You know it, I know it, everyone knows it. Google has screwed thousands of websites for no reason other than they could.

Google is the 3 million pound fascist gorilla, and you make excuses for them. Give me a break.

AJ Kohn // June 19th 2015

Sorry Frank but I won’t give you a break. Sites are not entitled to traffic from Google. They just aren’t.

Here’s an analogy to make my thoughts more clear. When you go to play laser tag somewhere there are some rules. If you violate those rules you don’t get to play laser tag there anymore. Maybe you don’t agree with those rules. That’s fine, but those are still the rules and when you break them you’ll have to go find another place to play laser tag as a result.

Yes, Google is the best place in town to play laser tag. But Google doesn’t go out of its way to screw websites for no reason. The rules and algorithm updates are there to improve search results for users. You want to be mad at someone, be mad at users. They vote with every click and Google just follows their lead. Because happy users lead to increased usage which leads to profit.

Are there some false positives when Google makes an algorithm change? Sure. But in aggregate the results get better. So the needs of the many outweigh the needs of the few. That’s life. It might not be fair but fair is where you get cotton candy.

The thing is, I might have paid for that game of laser tag I got tossed from. But with Google, you’re not paying them a dime. It’s a free source of traffic. So you’re complaining about losing something you were getting for free?

I actually think the ire that you and others express can be tied to loss aversion, which shows that losses are far more powerful psychologically than gains.

Hey, I empathize. It sucks to not play the most bad ass version of laser tag on the planet. But I can’t sympathize.

Mary // June 20th 2015

Nowadays, I see small updates almost every two months, small updates, but they change something every time.

I have many websites and blogs, some of them being older than a decade and those are the best indicator that shows me that a small update took place overnight.

The only thing that I don’t like is the fact that after any small update, Googlebot has a delay in indexing new pages.

AJ Kohn // June 20th 2015

Mary,

True. There are plenty of smaller updates that pass relatively unnoticed. And I don’t expect Google to let us in on each and every one of them. But Panda and Penguin are more easily identified because of their magnitude. And both have fairly specific pathways to remediation. So letting these updates go for so long is both annoying for sites and troubling for search quality.

Grant // June 20th 2015

“This is akin to ensuring the content equivalent of McDonald’s is still represented in search results.”

Love this. The challenge of defining quality is in understanding the many user signals that push content higher even if it is the equivalent of junk food. Therein lies the massive challenge Google has in leveraging a ‘crap ton’ of data to refine the definitions of quality in “realish” time. I’m actually surprised Panda is as frequent as it is when you consider the data crunching that needs to happen on a page by page, site by site and vertical by vertical basis.

We got out of the Panda penalty box last year and have committed to never going back! Agree 100% “if you can’t do the time, don’t do the crime”

Cheers

AJ Kohn // June 20th 2015

Thanks Grant and congratulations on both getting out of Panda jail and your commitment to never going back.

The definition of quality is very nuanced and, in many ways, very personal. I wrote a bit about that at the beginning of 2011.

But in a nutshell, the best selling beer in the US is Bud Light. I probably wouldn’t drink this if you paid me. Well, if you paid me handsomely I would but you get my drift. Instead I’m drinking something from Stone Brewing or Bear Republic. Quality and taste are subjective.

So not only do you have the ‘crap ton’ of data to deal with (which is serious stuff) you also have to figure out this ever-moving target of quality. It’s super difficult but … if you start down that road you can’t just stop.

Grant // June 20th 2015

Cheers AJ

I inherited the Panda jail sentence, but have smart guys on my team that helped dig out (The Shawshank Redemption of Search) 🙂

Great analysis from 2011! Agree with the aggregated personal interpretation of quality that manifests in Panda and other search updates. Why I skew towards persona based approach to research of quality; signals, queries, and ‘content that connects’ with satisfaction of user intent with understanding of user context.

Verbose way of saying “understand what your audience(s) are looking for, and then give it to them.” SEO 2015 is fundamentally an echo of Cutts’ directive of putting the user first.

Cheers

Yair Spolter // June 20th 2015

Thanks for reiterating our communal frustration, AJ. Just wondering why you only mentioned Panda and not Penguin, or did you mean both?

AJ Kohn // June 21st 2015

Yair,

I’m less concerned with Penguin because that algorithm isn’t applied at the site level but usually on a keyword level. So while the argument remains (somewhat) the same I don’t think it has nearly the impact of Panda on overall search quality.

Prasanna // June 22nd 2015

Greetings AJ!

I guess Google has stated that they’d be updating Panda every month, periodically. So I’m assuming it’s being rolled out consistently. (no updates though!)

Now here’s where the catch might be!!

Mobile.

They’d be serving the same content to Mobile & Desktop users & they’d be monitoring the signals they receive; and with ever increasing number of users..well it might be getting difficult for them to actually alter the algo!

With context & no. of queries…the type of content being created ..? feww!

I really am not bothered about the update but am excited to know how they’ll make this update, if any!!

AJ Kohn // June 22nd 2015

Prasanna,

No. Google representatives have gone on record to state that Panda still has to be run manually. So it’s not just running in the background.

And the interaction with the content would only slow the refresh down if it was part of the Panda algorithm. Outside of the human raters who might create a heuristic or training set of data, I haven’t seen this to be the case.

No doubt that Panda isn’t easy to run in its current state. But that simply means that it hasn’t been assigned resources (i.e. – it isn’t a priority) to make it a more scalable signal.
